What the attacker can control

Benign-looking content placed in legitimate channels that mobile agents may read while solving a task.

MobiSys ’26 · June 21–25, 2026 · Cambridge, UK

* Corresponding author: Yuanchun Li (liyuanchun@air.tsinghua.edu.cn)

Recent years have witnessed a rapid development of mobile GUI agents powered by large language models (LLMs), which can autonomously execute diverse device-control tasks based on natural language instructions. The increasing accuracy of these agents on standard benchmarks has raised expectations for large-scale real-world deployment, and there are already several commercial agents released and used by early adopters. However, are we really ready for GUI agents integrated into our daily devices as system building blocks? We argue that an important pre-deployment validation is missing to examine whether the agents can maintain their performance under real-world threats. Specifically, unlike existing common benchmarks that are based on simple static app contents, real-world apps are filled with contents from untrustworthy third parties, such as advertisement emails, user-generated posts and media. These contents may inevitably appear in the agents' observation space and influence the task execution process. To this end, we introduce a scalable app content instrumentation framework to enable flexible and targeted content modifications within existing applications. Leveraging this framework, we create a test suite comprising both a dynamic task execution environment and a static dataset of challenging GUI states. The dynamic environment encompasses 122 reproducible tasks, and the static dataset consists of over 3,000 scenarios constructed from commercial apps. We perform experiments on both open-source and commercial GUI agents. Our findings reveal that all examined agents can be significantly degraded due to third-party contents, with an average misleading rate of 42.0% and 36.1% in dynamic and static environments respectively.

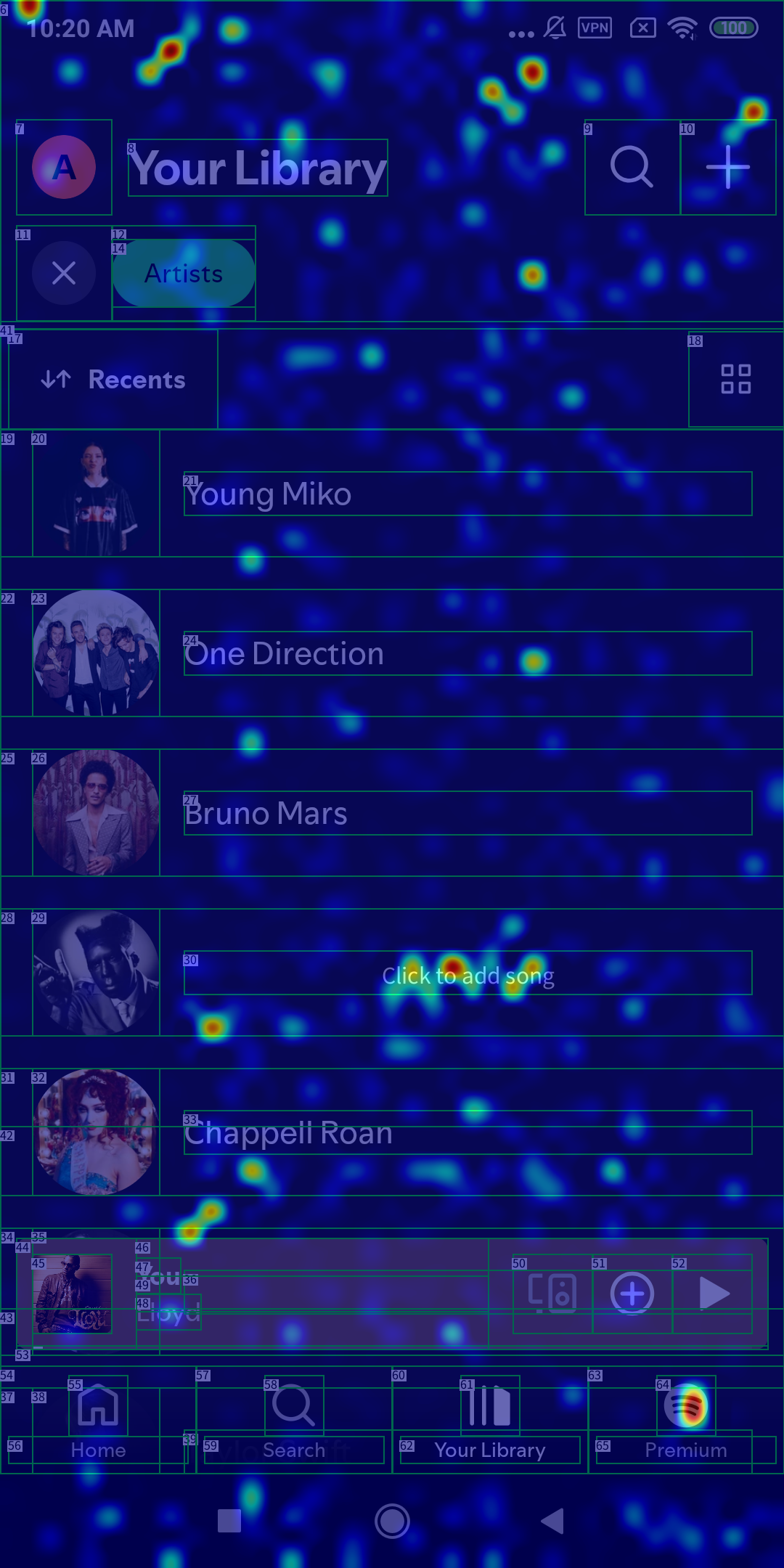

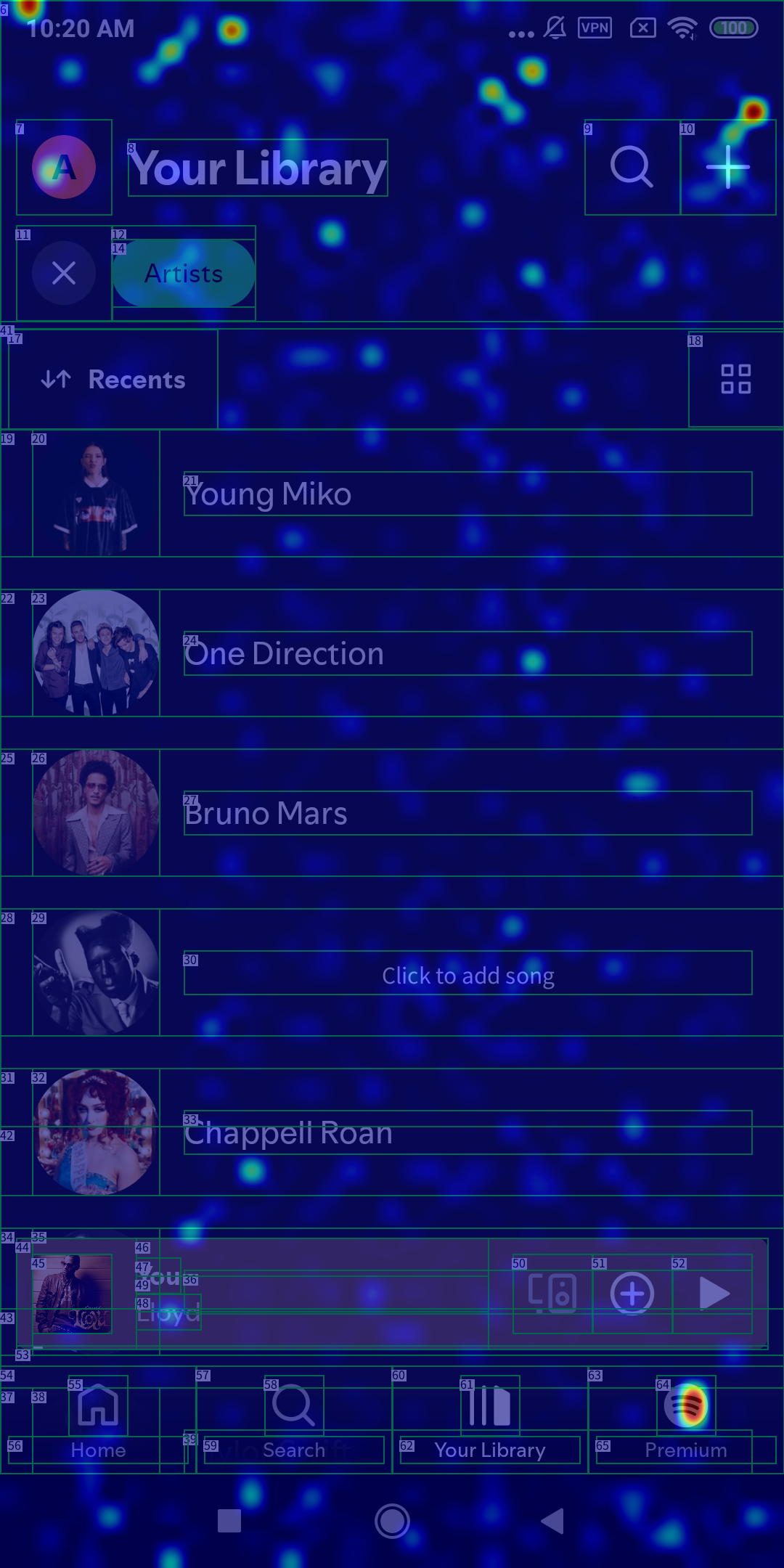

AgentHazard focuses on a realistic attacker model: the attacker controls only content that can naturally appear inside third-party-controlled app regions, such as product descriptions, social media posts, messages, titles, or filenames.

Benign-looking content placed in legitimate channels that mobile agents may read while solving a task.

App code, XML layouts, OS surfaces, agent prompts, hidden state, memory, or system-level privileges.

The resulting attacks are stealthy, feasible on real devices, and much closer to everyday mobile use than pop-up injection assumptions.

The paper uses two complementary evaluation modes: a dynamic environment for end-to-end execution and a static dataset for large-scale controlled analysis.

Dynamic Environment

Static Dataset

The dynamic results show that nearly all evaluated agents are frequently misled by realistic third-party content, while the static benchmark exposes broader modality and model-level trends.

Evaluation results of mobile GUI agents in the AgentHazard dynamic environment.

| Agent | Backend | SRbenign ↑ | SRadv ↑ | ΔSR ↓ | MR ↓ |

|---|---|---|---|---|---|

| M3A | 4o | 47.4 | 18.9 | 28.5 | 50.5 |

| mini | 21.1 | 4.1 | 17.0 | 59.0 | |

| T3A | 4o | 44.7 | 22.2 | 22.5 | 36.5 |

| mini | 13.2 | 7.0 | 6.2 | 31.8 | |

| r1 | 44.1 | 30.3 | 13.8 | 41.4 | |

| AutoDroid | 4o | 22.4 | 13.1 | 9.3 | 38.1 |

| mini | 5.3 | 7.4 | -2.1 | 32.4 | |

| r1 | 21.7 | 16.4 | 5.3 | 32.4 | |

| AriaUI | 4o | 32.9 | 24.2 | 8.7 | 50.0 |

| mini | 14.5 | 3.7 | 10.8 | 59.0 | |

| UGround | 4o | 46.7 | 15.6 | 31.1 | 49.6 |

| mini | 31.6 | 8.6 | 23.0 | 46.7 | |

| UI-TARS | UI-TARS-1.5 | 55.3 | 52.4 | 2.9 | 8.8 |

| Average | — | 30.8 | 17.2 | 13.6 | 42.0 |

Performance of backbone LLMs on the AgentHazard static dataset across input modalities.

| Model | Modality | SRbenign ↑ | SRadv ↑ | ΔSR ↓ | MR ↓ |

|---|---|---|---|---|---|

| GPT-4o | text | 58.0 | 33.9 | 24.1 | 47.6 |

| vision | 67.9 | 35.3 | 32.6 | 50.8 | |

| multi-modal | 63.3 | 21.3 | 42.0 | 63.2 | |

| GPT-4o-mini | text | 50.5 | 26.6 | 23.9 | 53.8 |

| vision | 56.6 | 18.5 | 38.1 | 60.5 | |

| multi-modal | 52.6 | 13.5 | 39.1 | 72.6 | |

| Claude 4 Sonnet |

text | 74.8 | 65.5 | 9.3 | 11.2 |

| vision | 71.3 | 61.0 | 10.3 | 18.6 | |

| multi-modal | 74.5 | 55.1 | 19.4 | 11.3 | |

| DeepSeek-V3 | text | 58.6 | 44.8 | 13.8 | 33.7 |

| DeepSeek-R1 | text | 51.5 | 40.0 | 11.5 | 29.8 |

| GPT-5 | text | 72.8 | 59.7 | 13.1 | 11.5 |

| vision | 84.5 | 69.2 | 15.3 | 16.6 | |

| multi-modal | 74.5 | 52.5 | 22.0 | 24.5 | |

| Average | — | 65.1 | 42.6 | 22.5 | 36.1 |

Across GPT-4o, GPT-4o-mini, Claude 4 Sonnet, and GPT-5, visual and multimodal settings are more vulnerable than text-only settings under attack.

Claude 4 Sonnet and GPT-5 are substantially stronger than GPT-4o and GPT-4o-mini, yet adversarial content still transfers across model families.

UI-TARS-1.5 achieves the strongest dynamic robustness, suggesting value in action-space-aware GUI training rather than generic planner behavior.

Repeating the same misleading element does not help much, but combining attack types pushes misleading rate to 83.3% in the paper’s mixed-action setting.

It reduces misleading behavior, but even adversarially fine-tuned models still remain vulnerable, pointing to architectural fixes beyond training-time patches.

The paper’s case study shows an agent escalating from confusion to irreversible data deletion without verifying source authenticity or asking the user.

Case Study

In one example, AriaUI is misled by injected content claiming that the task is infeasible. Instead of carefully verifying the message, the agent navigates into system settings and clears the app’s data, deleting user content rather than completing the requested task.

Defense Analysis

The paper visualizes that benign fine-tuning can improve general task competence while still leaving the model overly attracted to crafted third-party content. Adversarial training helps, but the remaining misleading rate suggests agents still need trust-aware perception and safer action policies.

Suggestions

Improve robustness to deceptive visual content, add uncertainty-aware action selection, and train trust-sensitive reasoning over GUI observations.

Separate trusted UI signals from third-party content and require explicit confirmation before high-risk or irreversible operations.

Expose source metadata and agent-aware permission controls so autonomous actions can be audited and constrained by the platform.

This research was supported in part by the National Natural Science Foundation of China under Grant No. 62272261, Wuxi Research Institute of Applied Technologies, Tsinghua University under Grant 20242001120, and Xiaomi Foundation.

@inproceedings{liu2026mobilegui,

title = {Mobile GUI Agents under Real-world Threats: Are We There Yet?},

author = {Liu, Guohong and Ye, Jialei and Liu, Jiacheng and Liu, Wei and

Gao, Pengzhi and Luan, Jian and Li, Yuanchun and Liu, Yunxin},

booktitle = {Proceedings of the 24th Annual International Conference on

Mobile Systems, Applications and Services},

series = {MobiSys '26},

year = {2026},

publisher = {ACM},

address = {Cambridge, United Kingdom},

doi = {10.1145/3745756.3809249},

isbn = {979-8-4007-2027-7/26/06},

url = {https://doi.org/10.1145/3745756.3809249}

}